Time lapse alignment: A comparison of Futura Photo and Futura Time Lapse

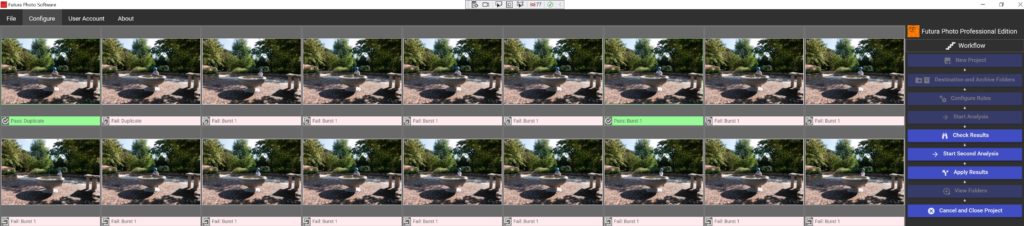

Introduction

Alignment of time lapse members can be necessary for different reasons: wind can shake the tripod; for a “long term time lapse” (weeks if not more), someone or something can bump into the camera support; or the time lapse can be shot handheld.

Example of a time lapse aligned with Futura Photo

Same time lapse, images not aligned …
