How to automatically sync photos shot with a mirrorless camera to your cloud provider, just like a smartphone!

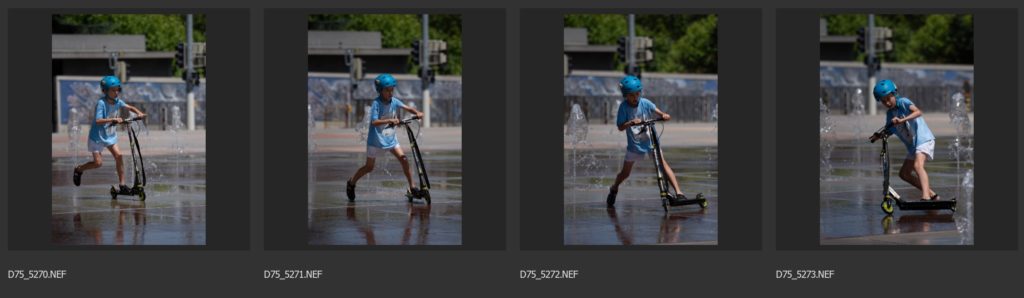

Summary

You can now avoid downloading images from memory cards (CF, SD, etc.) to your computer by using your smartphone as a bridge to the cloud provider where you store your images. This article explains how to do it and what the limitations are.

Introduction

With your smartphone camera, you shoot, and the images can be automatically …