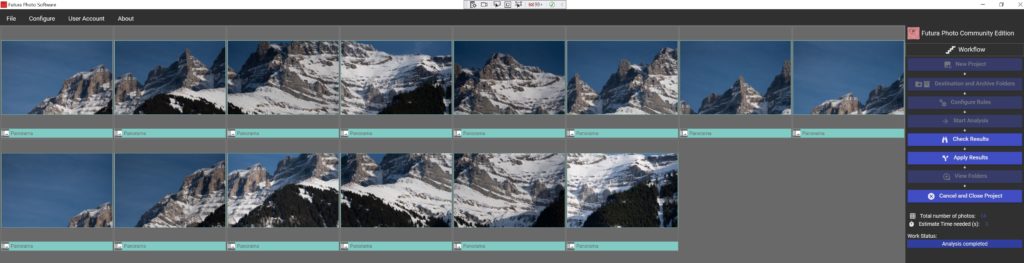

How AI Can Help The Culling Of Similar Images Right After The Photoshoot

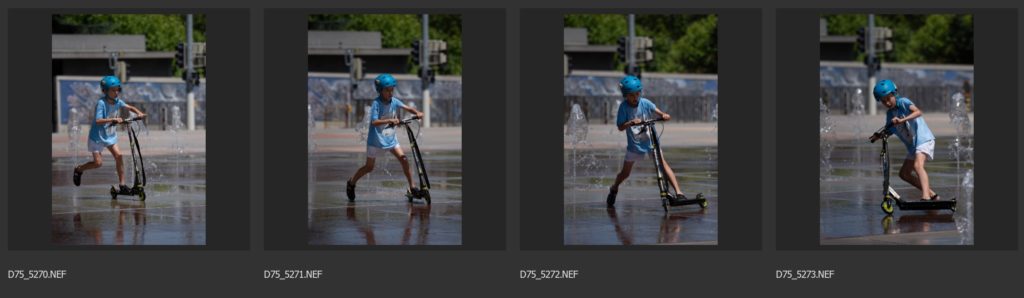

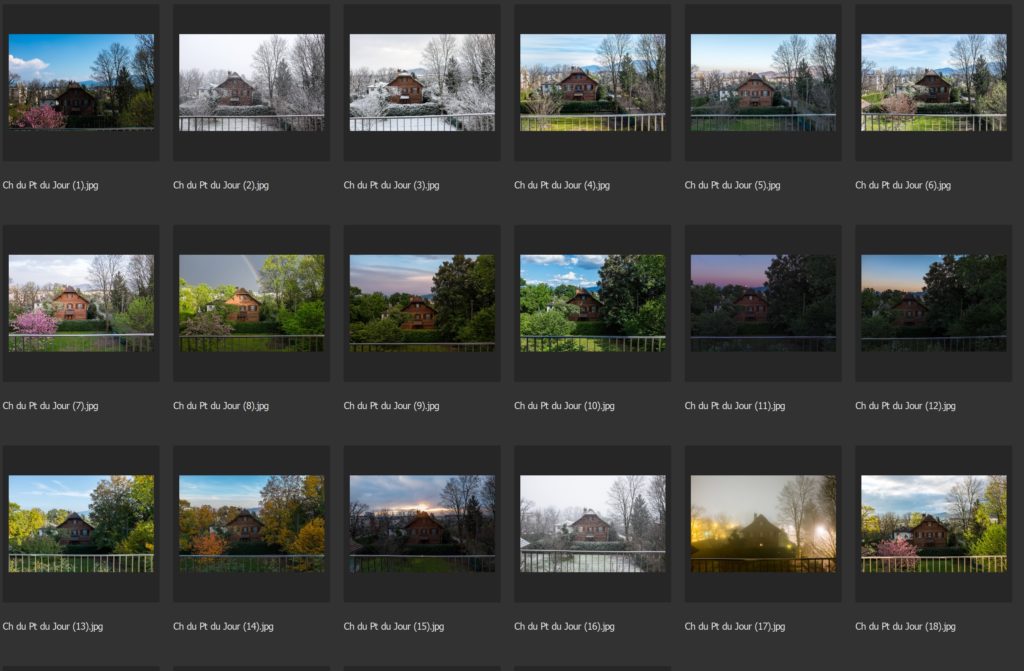

Introduction

Why do we shoot too many images (for good and bad reasons)? Fear of missing the right moment, burst mode, and the fact that two similar images cost nothing extra in a digital world. There are many reasons to shoot a lot of images per year. Some of them are good, some are not, but it …
